The integration of high-performance artificial intelligence into the core fabric of wireless connectivity is no longer a speculative project confined to research laboratories but a fundamental restructuring of how the global telecommunications grid operates. This transition, commonly known as AI-RAN, represents a departure from the rigid hardware constraints of the past, opting instead for a software-defined future where radio processing and machine learning algorithms coexist on general-purpose computing platforms. As mobile network operators face the dual pressure of stagnant revenues and rising data demands, the shift toward intelligent, general-purpose silicon provides a promising pathway to reclaim operational efficiency. This review examines the architectural pivot from specialized circuitry to AI-native infrastructure and evaluates how this evolution alters the competitive landscape of the telecommunications industry.

Introduction to AI-RAN and the Shift Toward General-Purpose Silicon

At its core, AI-RAN technology is defined by the infusion of neural network processing directly into the Radio Access Network, a domain traditionally reserved for fixed-function hardware. By leveraging artificial intelligence to manage complex tasks such as signal interference mitigation and dynamic resource allocation, operators can achieve higher spectral efficiency than static algorithms typically deliver. The fundamental principle here is the replacement of static radio algorithms with adaptive models that learn from real-world environmental conditions, allowing the network to “self-optimize” in real time. This capability is not merely an incremental update; it is a reworking of the radio stack, in which fixed formulas tuned for average conditions give way to models that adjust to the conditions actually observed at each site.
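As a rough illustration of the difference between a static and an adaptive radio algorithm, the following Python sketch uses a one-tap least-mean-squares (LMS) filter. LMS is a classical adaptive technique that stands in here for the neural models described above; it is not what any particular vendor ships. The function name, the step size, and the synthetic channel gain of 0.8 are all assumptions made for the example.

```python
def lms_estimate_gain(pilots, received, step_size=0.1):
    """Estimate a single real channel gain from known pilots with one-tap LMS."""
    weight = 0.0  # initial guess; a static algorithm would keep a fixed value
    for x, d in zip(pilots, received):
        error = d - weight * x           # mismatch between observation and model
        weight += step_size * error * x  # nudge the weight toward lower error
    return weight


# Synthetic data (assumed): alternating +1/-1 pilots through a gain of 0.8.
pilots = [1.0 if n % 2 == 0 else -1.0 for n in range(200)]
received = [0.8 * x for x in pilots]
estimated_gain = lms_estimate_gain(pilots, received)
print(round(estimated_gain, 6))
```

The estimate moves toward the true gain with every pilot it sees, which is the behavior the "learns from the environment" description refers to, although at a far smaller scale than a production model.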

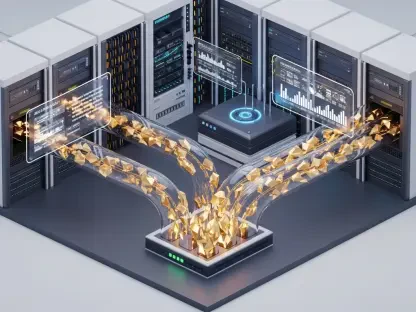

The physical manifestation of this shift is found in the hardware transition from Application-Specific Integrated Circuits (ASICs) to general-purpose chipsets. For decades, the RAN market relied on custom-designed ASICs that performed specific radio functions with extreme energy efficiency but almost no flexibility. However, the sheer volume of research and development currently pouring into the global AI sector has shifted the economic balance toward general-purpose chips. Modern telecommunications infrastructure is now beginning to reflect the architecture of hyperscale data centers, utilizing the same massive computational power that fuels generative AI to handle the heavy lifting of mobile data transmission. This convergence of IT and telecom brings the agility of cloud computing to the very edge of the wireless network.

Core Hardware Architectures and Technological Components

GPU-Accelerated Radio Processing and the Nvidia Ecosystem

The utilization of Graphics Processing Units (GPUs) for Layer 1 radio processing marks a significant departure from conventional network design, primarily due to the parallel processing capabilities inherent in GPU architectures. While CPUs are optimized to run a comparatively small number of threads with low latency, GPUs are designed to manage thousands of simultaneous operations, making them well suited to the math-heavy, highly repetitive requirements of signal modulation and beamforming. Nvidia has emerged as a central figure in this movement, positioning its architecture not just as a tool for graphics, but as a comprehensive platform for the modern software-defined radio. This ecosystem allows developers to utilize high-performance AI algorithms to manage radio traffic with a level of granularity that legacy systems cannot match.
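To make the parallelism argument concrete, here is a minimal Python sketch of Gray-coded QPSK modulation. It runs on a CPU and is not GPU code; its purpose is only to show that each output symbol depends on its own two input bits and nothing else, which is the kind of independent, repeated arithmetic that maps naturally onto many GPU threads. The function names and the bit-to-sign convention are assumptions chosen for the example.

```python
import math

_SCALE = 1 / math.sqrt(2)  # normalizes every symbol to unit energy


def qpsk_symbol(bit_i, bit_q):
    """Map one bit pair to a constellation point (bit 0 -> +, bit 1 -> -)."""
    return complex((1 - 2 * bit_i) * _SCALE, (1 - 2 * bit_q) * _SCALE)


def qpsk_modulate(bits):
    """Modulate a flat list of bits, two bits per symbol."""
    if len(bits) % 2:
        raise ValueError("QPSK needs an even number of bits")
    # No iteration reads another iteration's result, so on a GPU each
    # symbol could in principle be computed by its own thread.
    return [qpsk_symbol(bits[i], bits[i + 1]) for i in range(0, len(bits), 2)]


symbols = qpsk_modulate([0, 0, 1, 1, 0, 1])
print(symbols)
```

A real Layer 1 pipeline would process far larger blocks and many more stages, but the same independence between elements is what allows the work to be spread across thousands of threads.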

A key development within this space is the introduction of “slimline” GPU cards specifically tailored for the power and space constraints of mobile base stations. Through the Nokia-Nvidia alliance, these accelerators are being integrated into standard carrier-grade chassis, effectively turning a cell site into a localized data center. These cards allow the network to perform resource-intensive AI inference without the latency of sending data to a central cloud, which is critical for the low-latency requirements of 5G Advanced. By repurposing the same silicon used in massive AI training clusters for local radio processing, vendors are successfully bridging the gap between high-performance computing and terrestrial telecommunications.

CPU-Centric Virtualized RAN via Intel Architecture

In contrast to the GPU-heavy approach, many operators are exploring a CPU-centric model that utilizes high-performance Central Processing Units, such as Intel’s “Granite Rapids” family, to support Cloud RAN environments. This architecture focuses on the versatility of the x86 instruction set, aiming to handle both traditional network functions and AI-enhanced tasks on a single, unified processor. The primary advantage of this method is the simplification of the hardware stack; by avoiding the need for dedicated accelerators, operators can potentially reduce the complexity of their supply chains and lower the overall power consumption of the site. Intel has focused heavily on optimizing these CPUs to handle the specific timing and synchronization demands of radio software, which has historically been a weakness of general-purpose processors.

From a technical standpoint, the success of a CPU-centric RAN depends on the processor’s ability to maintain high throughput while executing AI workloads in parallel with standard radio traffic. Tier 1 operators have begun reporting real-world usage scenarios where a single high-performance server can manage the traffic of multiple cell sectors, a task that once required a rack of specialized equipment. This consolidation is a direct result of advancements in silicon lithography and instruction set extensions designed specifically for signal processing. While a CPU may not match the raw parallel throughput of a GPU, its efficiency in handling diverse, non-radio tasks makes it a compelling option for operators who prioritize a balanced, multi-functional network edge.

Economic Trends and Industry Evolution in Network Design

The transition to AI-RAN is driven as much by economic necessity as it is by technical innovation. The traditional RAN market has faced a notable decline, with global spending on specialized radio equipment dropping significantly as the initial wave of 5G build-outs concludes. This stagnation has created a vacuum that general-purpose AI chipmakers are eager to fill. By aligning telecommunications infrastructure with the broader silicon market, operators can benefit from the economies of scale that exist in the enterprise data center world. Investing in general-purpose silicon allows a telco to leverage a global supply chain that produces millions of chips, rather than relying on the niche, low-volume production runs associated with custom telecommunications ASICs.

This industry shift is fundamentally altering the procurement strategies of global telecommunications giants, who are increasingly moving toward software-defined networking. Instead of buying a “black box” solution from a single vendor, operators are looking for decoupled systems where software from one provider can run on hardware from another. This strategy is designed to break the cycle of high-margin, proprietary hardware sales that have dominated the industry for decades. As the boundaries between the data center and the radio site continue to blur, the power balance is shifting from traditional telecom equipment manufacturers toward the silicon giants who provide the underlying computational power for the AI age.

Real-World Applications and Deployment Strategies

In practical terms, AI-RAN is already being utilized to solve some of the most persistent challenges in wireless networking, particularly in the optimization of beamforming and spectral efficiency. In 5G Advanced deployments, AI models are used to predict the movement of users and adjust the direction of radio signals with microsecond precision, ensuring that signal strength is maximized while interference is minimized. This level of optimization is particularly valuable in dense urban environments where traditional static radio algorithms often struggle with signal reflections and high user density. By replacing these rigid formulas with dynamic machine learning models, operators can squeeze more capacity out of their existing spectrum holdings.
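The idea of steering a beam toward where a user is expected to be can be sketched in a few lines of Python. The example below assumes a uniform linear array with half-wavelength spacing and uses simple linear extrapolation as a stand-in for a learned mobility model; all function names and the sample angles are hypothetical and chosen only for illustration.

```python
import cmath
import math


def steering_vector(num_elements, angle_deg, spacing_wavelengths=0.5):
    """Per-element phase response of a uniform linear array toward angle_deg."""
    phase_step = 2 * math.pi * spacing_wavelengths * math.sin(math.radians(angle_deg))
    return [cmath.exp(1j * phase_step * n) for n in range(num_elements)]


def beam_weights(num_elements, target_deg):
    """Conjugate-matched weights, scaled so the gain toward the target is 1."""
    return [v / num_elements for v in steering_vector(num_elements, target_deg)]


def array_gain(weights, angle_deg):
    """Magnitude of the array response toward angle_deg for the given weights."""
    response = steering_vector(len(weights), angle_deg)
    return abs(sum(w.conjugate() * a for w, a in zip(weights, response)))


def predict_next_angle(previous_deg, current_deg):
    """Placeholder predictor: assume the user keeps moving at the same rate."""
    return current_deg + (current_deg - previous_deg)


predicted = predict_next_angle(10.0, 14.0)          # user seen at 10, then 14 degrees
weights = beam_weights(8, predicted)                 # steer toward the prediction
stale_gain = array_gain(beam_weights(8, 14.0), predicted)
print(array_gain(weights, predicted), stale_gain)
```

Comparing the two printed gains shows why prediction matters: a beam left pointing at the last observed position delivers less gain at the user's new position than one steered to the predicted angle.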

Strategic trials by major players like Orange have demonstrated the viability of these concepts in live network environments. Operators running these trials often treat Cloud RAN as a primary option and write AI-driven processing into large-scale requests for proposals, so that the infrastructure they buy is ready for future software updates. There are also use cases where AI is tasked with managing complex network traffic patterns, such as identifying and prioritizing critical data flows during periods of extreme congestion. These real-world applications show that the technology is moving past the experimental phase and becoming a standard component of the modern network toolkit, providing a tangible return on investment through improved user experiences and reduced operational overhead.
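Prioritizing critical flows under congestion can be illustrated with a small priority-queue sketch in Python. It assumes that some upstream classifier, possibly an AI model, has already assigned each flow a priority; that classifier is not shown. The `Flow` fields, the priority scale, and the capacity figures are hypothetical.

```python
import heapq
from dataclasses import dataclass


@dataclass(frozen=True)
class Flow:
    name: str
    priority: int       # assumed scale: lower number = more critical
    demand_mbps: float


def admit_flows(flows, capacity_mbps):
    """Admit flows in priority order until the available capacity runs out."""
    # The index breaks ties so equal-priority flows keep their arrival order.
    heap = [(flow.priority, index, flow) for index, flow in enumerate(flows)]
    heapq.heapify(heap)
    admitted, remaining = [], capacity_mbps
    while heap:
        _, _, flow = heapq.heappop(heap)
        if flow.demand_mbps <= remaining:
            admitted.append(flow.name)
            remaining -= flow.demand_mbps
    return admitted


flows = [
    Flow("video", 2, 60.0),
    Flow("emergency", 0, 10.0),
    Flow("iot", 1, 5.0),
    Flow("bulk", 3, 50.0),
]
admitted = admit_flows(flows, capacity_mbps=80.0)
print(admitted)
```

With 80 Mbps available, the three most critical flows fit and the bulk transfer is deferred. A production scheduler would also handle partial allocation and fairness, which this sketch deliberately leaves out.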

Technical Challenges and Market Obstacles

Despite the clear benefits, the path to widespread AI-RAN adoption is fraught with technical and strategic hurdles, most notably the risk of vendor lock-in. While the industry has pushed for open standards, platforms like Nvidia’s CUDA remain largely proprietary, meaning that software written for these GPUs cannot easily be ported to other hardware. This creates a dilemma for operators who wish to maintain hardware-software decoupling; they may find themselves tethered to a specific silicon provider to maintain the performance gains offered by AI-accelerated radio stacks. Balancing the desire for high-performance AI with the need for an open, competitive vendor ecosystem remains one of the most significant challenges for the next few years.
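One common way software teams try to limit this kind of dependency is to keep application code behind a vendor-neutral interface. The Python sketch below shows the pattern with a hypothetical `Layer1Backend` interface and two interchangeable implementations. The class names are invented for illustration, and the accelerator class is only a placeholder that repeats the same arithmetic in pure Python rather than calling any real vendor library.

```python
from abc import ABC, abstractmethod


class Layer1Backend(ABC):
    """Hypothetical vendor-neutral interface the radio software codes against."""

    @abstractmethod
    def equalize(self, received, channel):
        """Return per-subcarrier zero-forcing estimates received[k] / channel[k]."""


class CpuBackend(Layer1Backend):
    def equalize(self, received, channel):
        return [y / h for y, h in zip(received, channel)]


class AcceleratorBackend(Layer1Backend):
    """Placeholder: a real version would hand the same math to vendor kernels."""

    def equalize(self, received, channel):
        return [y * (1 / h) for y, h in zip(received, channel)]


def run_equalizer(backend, received, channel):
    # Application code depends only on the interface, not on the silicon.
    return backend.equalize(received, channel)


received = [1 + 1j, 2 - 2j]
channel = [0.5 + 0j, 1j]
cpu_out = run_equalizer(CpuBackend(), received, channel)
acc_out = run_equalizer(AcceleratorBackend(), received, channel)
print(cpu_out, acc_out)
```

The pattern keeps the choice of silicon in one place, but it does not remove the dilemma described above: the performance-critical code inside each backend still has to be written and tuned for that vendor's platform.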

Moreover, the technical trade-offs between different hardware architectures are significant. While GPUs offer immense power for AI tasks, they also come with higher thermal and energy demands, which can be difficult to manage in small, localized cell sites. Conversely, while standard CPUs are more energy-efficient and easier to integrate into existing cooling systems, they may lack the raw performance needed for the most intensive AI-RAN applications. Beyond the hardware itself, the financial stability of key players in the semiconductor market and the regulatory hurdles associated with high-tech supply chains add layers of complexity to long-term infrastructure planning. Maintaining a truly hardware-agnostic environment while utilizing specialized AI performance is a difficult tightrope walk for even the most advanced operators.

Future Trajectory: The Path to 6G and Beyond

Looking toward the end of the decade, the evolution of AI-RAN is expected to reach its full potential during the anticipated 6G refresh around 2030. In this future landscape, the integration of AI will likely move beyond Layer 1 processing to encompass the entire radio software stack, creating a fully autonomous network capable of real-time self-healing and predictive maintenance. This total integration would allow the network to anticipate traffic spikes and reconfigure its physical and logical resources before the user ever experiences a slowdown. The move toward general-purpose AI infrastructure will likely be complete by this point, with 6G being designed from the ground up to be “AI-native” rather than having AI features added as an afterthought.
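The notion of anticipating a traffic spike before users notice it can be sketched with a simple forecasting rule. The Python example below uses Holt's double exponential smoothing, a classical method chosen here only as a stand-in for whatever predictive models a future AI-native network might use. The smoothing constants, the headroom threshold, and the sample load figures are assumptions.

```python
def holt_forecast(series, alpha=0.5, beta=0.3, horizon=1):
    """Forecast `horizon` steps ahead with Holt's linear-trend smoothing."""
    if len(series) < 2:
        raise ValueError("need at least two observations")
    level = series[0]
    trend = series[1] - series[0]
    for value in series[1:]:
        previous_level = level
        level = alpha * value + (1 - alpha) * (level + trend)
        trend = beta * (level - previous_level) + (1 - beta) * trend
    return level + horizon * trend


def needs_scale_up(series, capacity, headroom=0.9):
    """Flag a reconfiguration when the forecast exceeds a share of capacity."""
    return holt_forecast(series) > headroom * capacity


load_history = [10.0, 20.0, 30.0, 40.0]  # assumed load samples, arbitrary units
next_load = holt_forecast(load_history)
print(next_load, needs_scale_up(load_history, capacity=55.0))
```

The decision is taken on the forecast rather than on the current load, so resources could be added while the observed traffic is still below the limit.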

The long-term impact of this shift will likely redefine the competitive landscape of the telecommunications sector. As traditional vendors increasingly pivot to become software-focused entities, the silicon giants will take a more direct role in shaping network standards. We may see a future where the distinction between a mobile operator and a cloud service provider disappears almost entirely, as both rely on the same fundamental AI-driven infrastructure to deliver services. This convergence will foster a new era of innovation, where the speed of network upgrades is limited only by the pace of software development, rather than the multi-year cycles of hardware manufacturing.

Summary of Findings and Strategic Assessment

The assessment of AI-RAN infrastructure revealed a clear industry consensus regarding the economic necessity of moving away from custom, purpose-built silicon. By analyzing the performance of both GPU-accelerated and CPU-centric models, the review found that the choice of hardware depended largely on an operator’s specific priorities regarding raw AI performance versus power efficiency and openness. It was observed that while GPUs provided a substantial leap in spectral efficiency through advanced radio algorithms, the proprietary nature of their software ecosystems presented a lingering risk of vendor dependency. In contrast, CPU-based models demonstrated a more balanced approach, offering sufficient performance for current Cloud RAN needs while maintaining the flexibility and stability required by global carriers.

The evidence suggested that the shift toward general-purpose silicon was an irreversible trend driven by the overwhelming R&D investments in the broader AI sector. This transition successfully repositioned the Radio Access Network as a dynamic, software-defined asset rather than a collection of static hardware components. As the industry prepared for the upcoming 6G era, the foundational work done in AI-RAN provided the architectural flexibility needed to support future innovations. Ultimately, the successful deployment of these technologies required a strategic balance between high-performance computing and the practical constraints of terrestrial mobile sites. The move toward an AI-native network architecture was confirmed as the primary driver for telecommunications growth in the coming decade.