The vision of autonomous robots gracefully weaving through bustling city sidewalks often collides with a staggering economic reality: a $500 delivery bot quickly becomes a $50,000 liability when it is forced to carry its own supercomputer. This price disparity is driving a pivotal shift in automation, one in which the machine’s intelligence is being physically separated from its mechanical frame. Instead of packing expensive, power-hungry processors into every hardware unit, the robotics industry is turning to high-speed connectivity as a lifeline. The success of the next generation of humanoid workers and autonomous fleets now depends less on the silicon inside them and more on the invisible signals pulsing around them.

As we stand in 2026, the shift toward Physical AI represents a fundamental change in how a machine’s “mind” is defined and deployed. Taking these devices from specialized laboratory prototypes to ubiquitous everyday tools requires abandoning a model of on-device processing that has hit both an economic and a physical ceiling. The industry is wrestling with the reality that if robots are to be affordable and efficient, their cognitive functions must be distributed across a network. This transition is not merely an engineering preference but a commercial necessity for the robotics sector’s survival.

The Economic and Technical Mandate for Network-Based Intelligence

The survival of the robotics industry hinges on resolving the cost-performance paradox: offloading “thinking” to the network is the only viable way to keep consumer hardware prices below the $2,000 threshold. A machine that runs its own complex inference needs high-end compute engines, which drive up both manufacturing costs and retail prices. By migrating these cognitive processes to the cloud, manufacturers can strip out most of the internal compute hardware, yielding lighter, more agile, and significantly cheaper robotic frames that remain accessible to the mass market.

Furthermore, the power constraint remains a formidable barrier to localized intelligence. Sophisticated AI models demand large amounts of energy, creating a punishing trade-off between a robot’s intelligence and its battery life: a machine that processes every decision locally risks spending more time at a charging station than performing its duties.

The rise of Physical AI is also triggering an “uplink revolution” that reverses traditional data-flow patterns. Where the previous decade’s networks were built to push high-definition video down to consumers, the current era requires them to ingest massive volumes of sensor data flowing up from millions of devices simultaneously.
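To make the power constraint concrete, the battery trade-off reduces to simple division: runtime per charge is usable capacity over total power draw. The minimal Python sketch below compares a robot that runs large models on board with one that offloads them and keeps only a modem and a small reflex model. Every figure in it (`BATTERY_WH`, `BASE_LOAD_W`, `LOCAL_INFERENCE_W`, `OFFLOAD_LOAD_W`) is a hypothetical placeholder chosen for illustration, not a measurement of any real robot.

```python
def runtime_hours(battery_wh: float, base_load_w: float, compute_load_w: float) -> float:
    """Hours of operation on one full charge at a constant total power draw."""
    return battery_wh / (base_load_w + compute_load_w)


# Hypothetical figures for illustration only; not measurements of any real robot.
BATTERY_WH = 1000.0        # usable battery capacity
BASE_LOAD_W = 150.0        # motors, sensors, and low-level controllers
LOCAL_INFERENCE_W = 100.0  # on-board accelerator running large models
OFFLOAD_LOAD_W = 15.0      # modem streaming sensor data plus a small on-device reflex model

local = runtime_hours(BATTERY_WH, BASE_LOAD_W, LOCAL_INFERENCE_W)
offloaded = runtime_hours(BATTERY_WH, BASE_LOAD_W, OFFLOAD_LOAD_W)
print(f"local inference: {local:.1f} h per charge")
print(f"offloaded inference: {offloaded:.1f} h per charge")
```

Because runtime scales with the inverse of total draw, every watt moved off the robot extends its time away from the charger. The advantage narrows, however, as the radio itself draws more power to push larger uplink volumes.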

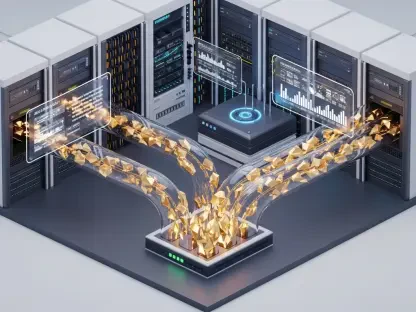

Mapping the Architecture of Distributed Intelligence

The “brain” of a future robot will not exist in a single location; it will be spread across a tiered hierarchy designed to balance speed, power, and security. Safety-critical “reflexes” must remain on-device to respond within microseconds, ensuring that a robot can stop or swerve instantly to avoid a collision even if the network fluctuates. This local layer is the robot’s equivalent of a spinal cord, managing immediate survival instincts while leaving higher-order reasoning to more powerful, distant processors.

Moving one step out, the 5G tower edge serves as the primary site for millisecond-level decision-making. These localized cell sites provide computational muscle that a robot cannot carry, enabling real-time navigation and environmental interaction without the lag of distant servers. In industrial settings, on-premises enterprise server racks connected over private 5G are becoming standard, keeping proprietary operational data within factory walls. Finally, centralized data centers handle generative AI tasks whose response times are measured in seconds, focusing on long-term planning and complex problem-solving rather than immediate physical movement.
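The tiered hierarchy above can be read as a placement policy: each task goes to a tier whose latency fits its deadline. The Python sketch below is one hypothetical way to express that, not a description of any deployed system. The latency figures in `TIER_LATENCY_MS`, the `Task` fields, and the rule of preferring the most powerful tier that still meets the deadline are all illustrative assumptions; only the ordering of tiers (microseconds on-device, milliseconds at the edge, seconds in the data center) comes from the text.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    ON_DEVICE = "on-device reflexes"
    TOWER_EDGE = "5G tower edge"
    ENTERPRISE = "enterprise rack on private 5G"
    CLOUD = "centralized data center"


# Hypothetical worst-case round-trip latencies in milliseconds. Placeholders that
# only mirror the orders of magnitude in the text; not measured figures.
TIER_LATENCY_MS = {
    Tier.ON_DEVICE: 0.05,
    Tier.TOWER_EDGE: 10.0,
    Tier.ENTERPRISE: 10.0,
    Tier.CLOUD: 1000.0,
}


@dataclass
class Task:
    name: str
    deadline_ms: float             # latest acceptable response time
    safety_critical: bool = False  # e.g. emergency stop or swerve
    proprietary: bool = False      # data must stay inside the factory


def place_task(task: Task, network_available: bool = True) -> Tier:
    """Pick a compute tier for a task (hypothetical policy, for illustration)."""
    # Reflexes never depend on the network, and nothing leaves the robot if the link is down.
    if task.safety_critical or not network_available:
        return Tier.ON_DEVICE
    # Proprietary workloads stay on the private-5G enterprise rack when it is fast enough.
    if task.proprietary:
        if TIER_LATENCY_MS[Tier.ENTERPRISE] <= task.deadline_ms:
            return Tier.ENTERPRISE
        return Tier.ON_DEVICE
    # Otherwise prefer the most powerful (most distant) tier that still meets the deadline.
    for tier in (Tier.CLOUD, Tier.TOWER_EDGE):
        if TIER_LATENCY_MS[tier] <= task.deadline_ms:
            return tier
    return Tier.ON_DEVICE


for t in (Task("emergency stop", 0.5, safety_critical=True),
          Task("obstacle re-route", 50.0),
          Task("shift planning", 5000.0),
          Task("weld inspection", 50.0, proprietary=True)):
    print(f"{t.name}: {place_task(t).value}")
```

Falling back to `Tier.ON_DEVICE` whenever no remote tier qualifies, or the network is unavailable, encodes the text's requirement that a robot must still be able to stop or swerve when connectivity fluctuates.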

The Reliability Gap and the Shadow of Past Failures

Telecommunications companies face a steep climb to prove they can act as AI brains rather than just data pipes. They must overcome significant technical skepticism rooted in a history of unfulfilled promises regarding low-latency connectivity. The industry is now moving beyond “best-effort” connectivity toward a model of guaranteed-performance networks. In this new paradigm, a dropped signal is no longer a mere inconvenience; it is a potential catastrophe that could lead to physical damage or injury in the real world, necessitating a level of network resilience never before seen in consumer markets.

This transition also revives the “MEC Ghost”: memories of the Mobile Edge Computing (MEC) push of the 2010s, which failed to generate substantial revenue for operators. The current AI boom offers a second chance, yet the threat from hyperscalers remains a dominant concern. Companies like Amazon, Google, and Microsoft are aggressively positioning themselves to provide intelligence at the edge, potentially relegating telcos to the role of landlords who simply rent out space at their tower sites. Whether network providers can capture the value of the intelligence itself or remain utility providers is the defining question of the decade.

Strategic Frameworks for a Connected AI Ecosystem

To prepare for the predicted capacity crunch in 2029, a fundamental realignment of infrastructure and business models is required. Operators must solve the uplink bottleneck to manage the 20% to 25% annual growth in data being sent from machines to the network. This involves deploying new spectrum and optimizing signal processing specifically for the massive influx of sensory data. Success requires telcos to move beyond simple connectivity and start providing “Inference-as-a-Service,” where the network itself performs the calculations required for the robot to function.
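The 20% to 25% annual uplink growth cited above compounds quickly. The minimal Python calculation below assumes the rate holds constant and compounds from a 2026 baseline (both assumptions; the checkpoints 2029 and 2035 are taken from this section) and computes the resulting traffic multipliers and doubling times. It produces relative factors only, since no absolute traffic volumes are given.

```python
import math


def growth_multiplier(annual_rate: float, years: int) -> float:
    """Factor by which traffic grows after `years` of compounding at `annual_rate`."""
    return (1.0 + annual_rate) ** years


def doubling_time_years(annual_rate: float) -> float:
    """Years for traffic to double at a constant compound `annual_rate`."""
    return math.log(2.0) / math.log(1.0 + annual_rate)


BASELINE_YEAR = 2026  # assumption: growth compounds from the article's "now"

for rate in (0.20, 0.25):
    for target in (2029, 2035):
        m = growth_multiplier(rate, target - BASELINE_YEAR)
        print(f"{rate:.0%} per year, {BASELINE_YEAR}->{target}: x{m:.2f}")
    print(f"  doubling time: {doubling_time_years(rate):.1f} years")
```

Under these assumptions, uplink volume would double roughly every three to four years, reaching about 1.7x to 2.0x the 2026 load by 2029 and about 5.2x to 7.5x by 2035.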

Looking toward the 2035 horizon, the goal is to make compute resources economically justified at every cell tower worldwide. This roadmap involves integrating deeply into the robotics and automation value chain so that the network becomes as indispensable as the hardware. By building a seamless bridge between silicon and steel, the telecommunications industry could finally realize its potential as the nervous system of a fully automated world. Achieving this, however, will require abandoning traditional business models in favor of a more integrated, high-stakes partnership with the Physical AI sector.

The transition toward network-based intelligence demands that telecommunications providers evolve into high-reliability compute partners. That shift will require overhauling infrastructure to support the massive uplink requirements of autonomous fleets, and industry leaders can secure their place in the value chain by offering specialized AI processing layers rather than raw bandwidth alone. If the sector closes the latency and reliability gaps, it will lay the groundwork for a future in which a machine’s intelligence is no longer limited by what it can carry, and the mobile network becomes the essential cognitive foundation of the global robotics economy.