The relentless expansion of artificial intelligence has pushed traditional data center architectures to their breaking point. It is forcing a radical rethink of how global telecommunications networks can function as cohesive, high-performance computing systems. The industry is shifting away from centralized silos toward a model of distributed intelligence. By building AI demands directly into the network fabric, providers are no longer just carriers of data; they are becoming architects of the computational environment itself.

Evolution of AI-Centric Networking and the Role of Telcos

Historically, telecommunications strategies focused on simple connectivity, but the current landscape demands a more sophisticated approach. The surge in AI processing requirements is transforming wide area networks into distributed data centers. This change is driven by the need to process vast amounts of data closer to the source, reducing the physical distance information must travel to reach a processing unit.
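The distance argument can be made concrete with a rough estimate of propagation delay over fiber. This minimal sketch assumes that light travels through glass at roughly two-thirds of its vacuum speed, or about 200,000 km/s (about 5 µs per km). The site distances are hypothetical round numbers, and queuing, serialization, and processing delays are ignored.

```python
# Rough propagation delay over optical fiber.
# Assumption: signal speed in fiber is ~200,000 km/s (refractive index ~1.5),
# i.e. about 5 microseconds per kilometre. Real links add queuing,
# serialization, and processing delay on top of this figure.
SPEED_IN_FIBER_KM_PER_S = 200_000.0


def propagation_delay_ms(distance_km: float) -> float:
    """Return the one-way propagation delay in milliseconds."""
    return distance_km / SPEED_IN_FIBER_KM_PER_S * 1_000.0


def round_trip_ms(distance_km: float) -> float:
    """Return the round-trip propagation delay in milliseconds."""
    return 2.0 * propagation_delay_ms(distance_km)


if __name__ == "__main__":
    # Hypothetical distances between a data source and a processing site.
    sites = [("edge site", 50), ("regional hub", 500), ("distant region", 5_000)]
    for label, km in sites:
        print(f"{label:>14}: {round_trip_ms(km):6.2f} ms round trip")
```

Under these assumptions, moving processing from a site 5,000 km away to one 50 km away cuts round-trip propagation delay from about 50 ms to about 0.5 ms. No amount of faster hardware can recover that difference.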

Core Pillars of the AI Network Fabric

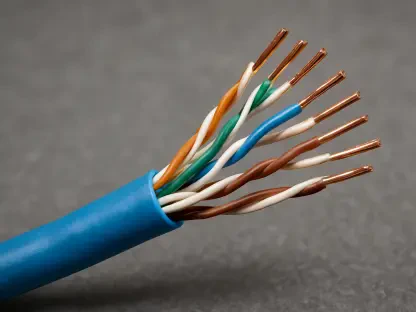

Optical Fiber and High-Speed Switching

Hardware remains the foundation of this transformation, and high-capacity optics and high-performance switching form its critical backbone. These components deliver the massive throughput needed to train large models and keep data moving between processors without bottlenecks. This physical layer must be robust enough to sustain the intense, continuous load that modern AI workloads impose.
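To illustrate why link capacity matters, this sketch estimates how long it takes to move a fixed payload at different line rates. The 1 TB payload and the 100/400/800 Gb/s rates are hypothetical round numbers. The calculation is idealized: it assumes the link runs at its full nominal rate with no protocol overhead or congestion.

```python
# Idealized time to transfer a payload over a single link.
# Assumptions: the link runs at its full nominal rate; protocol overhead,
# congestion, and retransmissions are ignored.


def transfer_time_s(payload_bytes: float, link_gbps: float) -> float:
    """Seconds to move payload_bytes over a link of link_gbps gigabits/s."""
    if link_gbps <= 0:
        raise ValueError("link rate must be positive")
    bits = payload_bytes * 8
    return bits / (link_gbps * 1e9)


if __name__ == "__main__":
    payload = 1e12  # hypothetical 1 TB payload, e.g. a model checkpoint
    for rate in (100, 400, 800):
        print(f"{rate:>4} Gb/s: {transfer_time_s(payload, rate):6.1f} s")
```

Under these assumptions, the same terabyte takes about 80 s at 100 Gb/s, 20 s at 400 Gb/s, and 10 s at 800 Gb/s.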

GPU-to-GPU Connectivity and Metcalfe’s Law

The value of these networks follows a modified version of Metcalfe’s Law, which holds that a network's value grows roughly with the square of the number of connected nodes. Each additional GPU adds potential connections to all the others, so the system's capability grows much faster than linearly. This calls for a flexible, extensible fabric that can scale smoothly. Unlike traditional client-server models, AI requires mesh-like interconnectivity that allows thousands of processors to function as a single unit.
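Metcalfe’s Law is usually tied to the count of distinct pairwise links: n nodes can form n(n-1)/2 of them. This sketch counts those potential links for a few GPU cluster sizes. It treats every pair as directly reachable, which is an idealization and not a model of any real fabric topology.

```python
# Count potential pairwise GPU-to-GPU links, the quantity behind
# Metcalfe's Law (value ~ n^2). Idealization: every GPU is assumed to be
# able to reach every other GPU; real fabrics use switched topologies
# rather than literal full meshes.


def pairwise_links(n: int) -> int:
    """Number of distinct unordered pairs among n endpoints."""
    if n < 0:
        raise ValueError("n must be non-negative")
    return n * (n - 1) // 2


if __name__ == "__main__":
    for gpus in (8, 1_024, 2_048):
        print(f"{gpus:>5} GPUs -> {pairwise_links(gpus):>9,} potential links")
```

Doubling a cluster from 1,024 to 2,048 GPUs roughly quadruples the number of potential links, from 523,776 to 2,096,128. That is quadratic growth rather than exponential growth.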

Core IP Routing and Latency Management

Modern core routing addresses the trilemma of throughput, latency, and power consumption through integrated chipsets. These routers minimize the time a packet takes to traverse the network, which keeps GPUs synchronized. This precision is vital for avoiding the idle "dead time" common in less optimized infrastructure, where processors sit waiting for data.
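The dead-time problem can be illustrated with one synchronous step, such as a gradient exchange, in which every GPU must wait for the slowest participant before moving on. This minimal sketch uses hypothetical per-GPU completion times. It shows that a single slow network path sets the pace for the whole group.

```python
from typing import Sequence

# Model of one synchronous step (e.g. a gradient exchange behind a barrier).
# The completion times used below are hypothetical illustrations.


def step_time_ms(arrival_ms: Sequence[float]) -> float:
    """A synchronous step finishes only when the slowest participant arrives."""
    if not arrival_ms:
        raise ValueError("need at least one participant")
    return max(arrival_ms)


def idle_time_ms(arrival_ms: Sequence[float]) -> float:
    """Total time GPUs spend waiting at the barrier for the slowest peer."""
    finish = step_time_ms(arrival_ms)
    return sum(finish - t for t in arrival_ms)


if __name__ == "__main__":
    balanced = [10.0, 10.2, 10.1, 10.3]
    one_slow_path = [10.0, 10.2, 10.1, 14.0]
    for label, times in [("balanced", balanced), ("one slow path", one_slow_path)]:
        print(f"{label:>13}: step {step_time_ms(times):5.1f} ms, "
              f"idle {idle_time_ms(times):5.1f} ms")
```

With these hypothetical numbers, one path that is about 4 ms slower raises total idle time across the four GPUs from about 0.6 ms to about 11.7 ms per step.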

Emerging Trends in Distributed Data Center Architecture

Innovation is trending toward treating the entire global network as one massive, unified data center. At the same time, tighter integration of chipsets and optics is pushing the industry toward significantly higher energy efficiency. Together, these trends suggest that the boundary between the cloud and the network is blurring.

Real-World Applications and Industrial Deployment

Operators such as Indosat Ooredoo Hutchison are already demonstrating the practical viability of these systems. By serving as primary hosts for GPU-heavy workloads, these telcos provide specialized environments for industries ranging from healthcare to finance. Such deployments indicate that the infrastructure can handle real-world complexity while maintaining high availability.

Technical and Market Adoption Challenges

Despite the promise, the high capital expenditure required for these upgrades remains a significant hurdle. Traffic is highly volatile, with massive peaks that are hard to predict, and handling it requires a level of agility that many legacy systems lack. Ongoing efforts aim to improve return on investment by accelerating hardware deployment and refining resource allocation.

The Future Landscape of AI-Powered Infrastructure

The coming years will likely see telcos complete the transition from utility providers to essential high-tech partners. Advances in network automation should allow these systems to self-optimize in real time based on the specific needs of the AI models they support. This symbiotic relationship is poised to define the next decade of global technological progress.

Strategic Summary and Assessment

This review shows that physical hardware is the cornerstone of the AI revolution. Without advances in optical and routing technology, the potential of large-scale intelligence would remain untapped. The shift is restoring the prestige of the telecommunications industry and placing it at the center of the technological frontier for the foreseeable future.