As the world moves from experimental IoT pilots to massive, global deployments, the underlying telecommunications infrastructure is facing a reckoning. Vladislav Zaimov, a seasoned expert in enterprise telecommunications and network risk management, stands at the forefront of this shift, advocating for a fundamental change in how we connect millions of constrained devices. In this conversation, Zaimov explores why the traditional telecom model is failing the IoT industry and how a programmable, hardware-centric approach to connectivity is the only way to overcome the physical bottlenecks of large-scale rollouts. We delve into the necessity of moving beyond the hype of 5G to focus on the robust, low-power reliability that defines successful global infrastructure.

When scaling IoT from thousands to millions of units, why is it inaccurate to treat these deployments like software products? What specific physical limitations should engineers prioritize during the design phase to avoid hardware-level bottlenecks once devices are in the field?

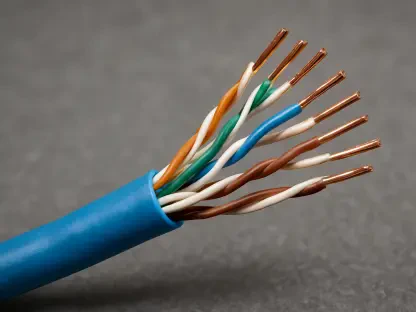

When we talk about moving from a few thousand units to a global fleet of millions, we must acknowledge that IoT scales like hardware, not software. You might be able to push a firmware patch to fix a minor security flaw, but you cannot physically swap a radio module or a sensor once it is buried underground or moving across a continent. In my experience, engineers often get caught in the trap of treating these devices like smartphones, but in reality, they are basic hardware components like smart meters, asset trackers, or e-scooters. If you do not account for these physical constraints and the immutability of the hardware during the initial design phase, you end up stuck with an inflexible system that cannot survive the long lifecycle these devices are expected to maintain. The frustration of a “bricked” device in a remote location is a visceral reminder that hardware limitations are the true ceiling of any IoT ambition.

Hardware and cloud components are often immutable once deployed, making the connectivity layer the only flexible variable. How can making this layer programmable turn a basic sensor into a dynamic application, and what technical steps are necessary to achieve that transparency?

The standard IoT architecture consists of three pillars: hardware, connectivity, and the cloud, but the hardware and cloud are often quite rigid once the deployment goes live. By making the connectivity layer programmable and transparent, we can effectively bridge the gap, allowing a simple sensor to behave with the agility of a high-level application. This requires moving away from opaque, fragmented frameworks and building a system where the network itself is aware of the device’s specific constraints and can adapt on the fly. When this layer is truly programmable, you no longer have to redesign your entire device just to switch networks or adapt to new regional requirements. It feels less like managing a telecom contract and more like writing code, where the network serves the application’s needs rather than the other way around.
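Editor's illustration: the idea of a network that is "aware of the device's specific constraints" can be pictured as a small selection policy kept outside the device. The sketch below is a minimal, hypothetical example and not any provider's actual API; the `Network` and `DeviceProfile` types, their fields, and the `select_network` function are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple


@dataclass(frozen=True)
class Network:
    """A hypothetical description of one available network."""
    name: str
    region: str
    technology: str          # e.g. "NB-IoT", "LTE-M", "2G"
    supports_power_saving: bool


@dataclass(frozen=True)
class DeviceProfile:
    """Hypothetical constraints declared for a class of devices."""
    region: str
    battery_powered: bool
    allowed_technologies: Tuple[str, ...]  # in order of preference


def select_network(device: DeviceProfile,
                   networks: Sequence[Network]) -> Optional[Network]:
    """Return the first network that satisfies the device's constraints.

    Technologies are tried in the device's order of preference.
    Returns None when no network fits.
    """
    for technology in device.allowed_technologies:
        for network in networks:
            if network.region != device.region:
                continue
            if network.technology != technology:
                continue
            # Assumption: battery-powered devices need a power-saving mode.
            if device.battery_powered and not network.supports_power_saving:
                continue
            return network
    return None


# Illustrative data only; the names are made up.
networks = [
    Network("carrier-a", "EU", "2G", supports_power_saving=False),
    Network("carrier-b", "EU", "LTE-M", supports_power_saving=True),
    Network("carrier-c", "US", "NB-IoT", supports_power_saving=True),
]
meter_eu = DeviceProfile("EU", True, ("NB-IoT", "LTE-M"))
meter_us = DeviceProfile("US", True, ("NB-IoT", "LTE-M"))

chosen_eu = select_network(meter_eu, networks)
chosen_us = select_network(meter_us, networks)
```

In this sketch, moving a device to a new region changes only the profile data passed to the policy, which is the kind of flexibility the answer above attributes to a programmable connectivity layer.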

Many connectivity providers focus on reselling data plans rather than solving the underlying logistical hurdles of global deployment. How does this legacy model restrict innovation, and what specific infrastructure changes are required to support millions of devices without constant redesigns?

The traditional model, in which mobile virtual network operators simply act as middlemen reselling data plans, is a relic of the past that actively stifles modern innovation. Most companies building connected products have zero interest in becoming telecom experts or negotiating complex carrier contracts; they just want their data to move securely and reliably across borders. To support millions of devices, we need a shift toward infrastructure partners who provide a unified global framework rather than a collection of fragmented SIM cards and regional data buckets. This change in infrastructure allows for a simplified procurement process, meaning a company can focus on its core business rather than the logistical nightmare of managing millions of disparate connections. It is about building a reliable foundation that permits seamless interaction worldwide, removing the friction that currently keeps cellular IoT trapped in a cycle of complexity.

Since many devices like smart meters or trackers transmit very small amounts of data, why is the industry focus on 5G or 6G speeds often misplaced? What connectivity qualities are actually more vital than raw bandwidth for these low-power, constrained devices?

There is a significant amount of hype surrounding 5G and 6G, but for the vast majority of IoT applications, raw speed is almost entirely irrelevant and often a distraction. If you are operating a fleet of asset trackers or smart meters, you are often sending tiny packets of data that even an older 2G network can handle with absolute ease. The industry’s obsession with high bandwidth often ignores the real-world needs of low-power, constrained devices that require longevity and extreme reliability over massive throughput. What really matters is having a connectivity architecture that is robust and adaptable to the application’s power constraints, ensuring that a device can remain functional for years without a battery swap. We need to prioritize infrastructure that supports these “minimal data” use cases with the same intensity we currently apply to high-speed consumer cellular.
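Editor's illustration: a rough back-of-the-envelope calculation shows why time spent transmitting, rather than peak bandwidth, tends to decide how long a constrained device lasts. The sketch below is a simplified estimate under stated assumptions: the function name `battery_life_years` and every number used are hypothetical, and the model ignores battery self-discharge, temperature, and network registration overhead.

```python
SECONDS_PER_DAY = 24 * 3600


def battery_life_years(capacity_mah: float,
                       sleep_current_ma: float,
                       tx_current_ma: float,
                       tx_seconds_per_day: float) -> float:
    """Estimate battery life from a two-state (sleep / transmit) duty cycle."""
    sleep_seconds = SECONDS_PER_DAY - tx_seconds_per_day
    # Average daily charge drawn, converted from mA*s to mAh.
    mah_per_day = (sleep_current_ma * sleep_seconds
                   + tx_current_ma * tx_seconds_per_day) / 3600
    return capacity_mah / mah_per_day / 365


# Illustrative values only, not measurements from any real device.
short_airtime = battery_life_years(
    capacity_mah=5000, sleep_current_ma=0.005,
    tx_current_ma=200, tx_seconds_per_day=10)
long_airtime = battery_life_years(
    capacity_mah=5000, sleep_current_ma=0.005,
    tx_current_ma=200, tx_seconds_per_day=60)

print(f"10 s on air per day: about {short_airtime:.1f} years")
print(f"60 s on air per day: about {long_airtime:.1f} years")
```

With these assumed figures, a device that spends one minute a day on air lasts roughly a fifth as long as one that spends ten seconds, even though the data volume in both cases is tiny. That is the sense in which reliability and power behavior matter more than throughput for this class of device.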

Organizations frequently get bogged down in the complexities of carrier contracts and regional network fragmentation. How can a company transition away from managing telecom infrastructure to focusing solely on business outcomes, and what metrics indicate a truly successful global rollout?

A company successfully transitions away from the “telecom headache” when connectivity becomes an invisible, trusted utility, much like electricity or water. Success in a global rollout isn’t measured by the number of SIM cards you have activated, but by the reliability, security, and ubiquity of the connection across every single unit in the field. When an organization can focus solely on the data and the business outcomes, such as optimizing a supply chain or reducing energy waste, without worrying about regional network fragmentation, it has truly achieved scale. The ultimate metric is the total elimination of connectivity-related bottlenecks, allowing millions of devices to interact seamlessly regardless of where they are physically located. It is about shifting the perspective so that the cellular connectivity adapts to the application, clearing the path for genuine progress and operational peace of mind.

What is your forecast for the evolution of cellular IoT connectivity?

I believe we are entering an era where cellular connectivity will finally adapt to the application, rather than forcing the application to conform to outdated telecom standards. We will see the end of the “reseller” model as providers transform into true infrastructure partners that prioritize global reach and operational simplicity. This shift will finally unlock the potential for millions of devices to be deployed worldwide with the same ease we currently experience with software updates. Ultimately, the future of IoT lies in making the complex global network invisible to the end user, ensuring that connectivity is as dynamic and programmable as the code it carries. This foundational change is the key to turning existing challenges into opportunities for massive growth and innovation across the entire ecosystem.