Real-time intelligent connectivity is now a baseline expectation across markets, yet most telecom networks were designed for a different era. This creates an operating gap: applications demand prediction, intent, and automation, while networks still reflect hardware-centric constraints. Cloud and AI together form the missing operating layer that closes this gap. The implications extend beyond efficiency and service quality—digital sovereignty and national competitiveness increasingly depend on operators delivering intelligent infrastructure at scale.

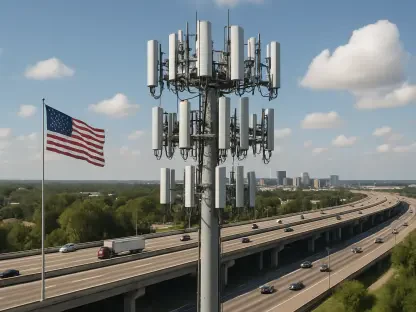

Global demand trends reinforce urgency. Mobile data traffic continued strong growth through 2024, driven by video, gaming, and enterprise IoT workloads. Meanwhile, 5G subscriptions surpassed 1.5 billion globally, raising expectations for low-latency, high-reliability services across consumer and industrial domains. Operators that treat cloud-AI convergence as a core operating system rather than experimental tooling will define the next decade.

The Infrastructure–Intelligence Gap

Traditional network design prioritizes throughput and availability. Modern workloads require more: networks that anticipate demand and adapt continuously. Ultra-reliable low-latency communications (URLLC) for autonomous systems target sub-10 millisecond end-to-end performance under load, with deterministic reliability rather than best-effort delivery. Meeting this requires predictive traffic modeling, dynamic routing, intent-based control, and proactive failover before degradation becomes visible. Static architectures and manual runbooks cannot operate effectively at national scale.
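Proactive failover means acting on a trend before a threshold is crossed. The following is a minimal sketch, assuming per-interval p99 latency samples for one path and a 10 ms budget taken from the sub-10 ms target above. The least-squares linear trend, the three-interval horizon, and the function names are illustrative assumptions, not a production forecasting method.

```python
from statistics import mean


def linear_trend(samples: list[float]) -> tuple[float, float]:
    """Least-squares fit of y = intercept + slope * t for t = 0..n-1."""
    n = len(samples)
    t_mean = (n - 1) / 2
    y_mean = mean(samples)
    num = sum((t - t_mean) * (y - y_mean) for t, y in enumerate(samples))
    den = sum((t - t_mean) ** 2 for t in range(n))
    slope = num / den
    return y_mean - slope * t_mean, slope


def should_fail_over(p99_latency_ms: list[float],
                     budget_ms: float = 10.0,
                     horizon: int = 3) -> bool:
    """True if the extrapolated latency breaches the budget within `horizon`
    future intervals, even when the latest sample is still within budget."""
    if len(p99_latency_ms) < 2:
        return False  # not enough history to estimate a trend
    intercept, slope = linear_trend(p99_latency_ms)
    future_t = len(p99_latency_ms) - 1 + horizon
    return intercept + slope * future_t > budget_ms


# Latency is still 8.4 ms (within budget) but rising about 0.8 ms per interval.
print(should_fail_over([6.0, 6.8, 7.6, 8.4]))  # True
print(should_fail_over([6.0, 6.1, 6.0, 6.1]))  # False
```

The point of the design is that the decision depends on where latency is heading, not where it is, so traffic can be shifted while users still see acceptable performance.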

A strategic layer also emerges. Reliance on external platforms for AI inference and model orchestration across critical infrastructure introduces sovereignty and resilience risks. Cloud-AI convergence enables operators to localize sensitive workloads while retaining elasticity and modern tooling. The choice is no longer public cloud versus telco cloud, but a policy-aware distributed fabric spanning both.
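A policy-aware fabric ultimately reduces to rules evaluated before a workload or dataset is placed. The sketch below is a minimal, hypothetical example of a default-deny residency check: the data classes, region names, and the `RESIDENCY_POLICY` table are invented for illustration, and production deployments would more likely express such rules in a dedicated policy engine such as Open Policy Agent.

```python
from dataclasses import dataclass

# Hypothetical policy table: data class -> regions where processing is permitted.
RESIDENCY_POLICY: dict[str, set[str]] = {
    "subscriber_pii": {"eu-central"},
    "network_telemetry": {"eu-central", "eu-west"},
    "aggregated_kpis": {"*"},  # "*" means no residency constraint
}


@dataclass(frozen=True)
class PlacementRequest:
    data_class: str
    target_region: str


def is_allowed(req: PlacementRequest,
               policy: dict[str, set[str]] = RESIDENCY_POLICY) -> bool:
    """Default-deny check: unknown data classes are never placed."""
    allowed = policy.get(req.data_class)
    if allowed is None:
        return False
    return "*" in allowed or req.target_region in allowed


print(is_allowed(PlacementRequest("subscriber_pii", "us-east")))     # False
print(is_allowed(PlacementRequest("network_telemetry", "eu-west")))  # True
```

Keeping the rule default-deny means a newly introduced data class cannot reach an unapproved region simply because nobody has written a policy for it yet.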

AICON: A Practical Model for Cloud-AI in Telecom

Scaling cloud-AI requires alignment between technology decisions and operational outcomes. The AICON framework provides a structured approach across five interdependent dimensions:

Infrastructure Elasticity. Transition from hardware-centric deployments to containerized cloud-native network functions (CNFs) connected through service meshes. Capacity scales in minutes rather than quarters.

Intelligence Integration. Embed machine learning across the stack for RAN congestion forecasting, core routing optimization, and real-time customer experience adaptation.

Data Fabric Unification. Consolidate telemetry, subscriber data, and operational logs into a governed, queryable fabric with a shared semantic layer for consistent metrics.

Governance Architecture. Define accountability for AI-driven decisions, including explainability, bias controls, and data residency enforced via policy-as-code.

Operational Autonomy. Progress from assisted analytics to self-optimizing systems under human oversight, automating repetitive operations while reserving judgment-driven decisions for human operators.
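One concrete form of the Infrastructure Elasticity dimension is the proportional scaling rule used by the Kubernetes Horizontal Pod Autoscaler: desired replicas equal `ceil(current_replicas * current_metric / target_metric)`, with no change when the ratio is within a tolerance of 1.0 (0.1 by default). The sketch below reimplements that rule in plain Python for illustration only; applying it to a particular CNF, the choice of CPU as the metric, and the replica bounds are assumptions.

```python
import math


def desired_replicas(current_replicas: int,
                     current_metric: float,
                     target_metric: float,
                     min_replicas: int = 1,
                     max_replicas: int = 50,
                     tolerance: float = 0.1) -> int:
    """HPA-style proportional scaling, clamped to [min_replicas, max_replicas]."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        desired = current_replicas  # close enough to target: avoid flapping
    else:
        desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


# 4 replicas of a control-plane function at 90% CPU against a 60% target.
print(desired_replicas(4, 90.0, 60.0))  # 6
```

The tolerance band is what keeps a function hovering near its target from being resized on every evaluation cycle.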

In practice, consider a regional operator during a major sports event. Elastic infrastructure scales containerized control-plane components dynamically. Forecasting models detect congestion hotspots in advance. A unified data fabric merges stadium IoT, mobility patterns, and network telemetry into a single operational view. Governance rules define automation thresholds and approval boundaries. Autonomous orchestration creates and tears down dedicated slices for broadcast, emergency services, and consumer traffic in real time. The same approach applies to smart city corridors where demand spikes unpredictably across mobility, safety, and IoT systems.
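The forecasting step in this scenario can be illustrated with simple exponential smoothing over per-cell load. This is a minimal sketch, assuming physical resource block (PRB) utilization samples per cell in the range 0 to 1; the smoothing factor, the 0.8 congestion threshold, and the cell IDs are hypothetical, and production models would typically add seasonality and event features.

```python
def ses_forecast(series: list[float], alpha: float = 0.5) -> float:
    """One-step-ahead forecast via simple exponential smoothing."""
    level = series[0]
    for value in series[1:]:
        level = alpha * value + (1 - alpha) * level
    return level


def congested_cells(prb_utilization: dict[str, list[float]],
                    threshold: float = 0.8,
                    alpha: float = 0.5) -> list[str]:
    """Cell IDs whose forecast utilization exceeds the threshold, sorted."""
    return sorted(cell for cell, series in prb_utilization.items()
                  if ses_forecast(series, alpha) > threshold)


load = {
    "cell-stadium-north": [0.60, 0.70, 0.85, 0.95],
    "cell-parking-west": [0.50, 0.40, 0.45, 0.50],
}
print(congested_cells(load))  # ['cell-stadium-north']
```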

What Already Works in Production

Context-aware orchestration. Models trained on historical patterns and event signals enable predictive capacity allocation, reducing congestion and improving user experience during peak periods.

Conversational NOC assistants. Natural language interfaces replace complex command-line workflows, reducing operational friction and accelerating engineer productivity.

Federated learning. Edge-based training enables compliance with data residency constraints while sharing model updates instead of raw data, supporting fraud detection, traffic optimization, and anomaly detection across jurisdictions.
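The aggregation step behind federated learning can be sketched with Federated Averaging (FedAvg), in which a coordinator combines model parameters from each site, weighted by that site's local sample count. This is a minimal illustration, assuming flat parameter vectors and trusted sites; the function name is hypothetical, and real deployments usually add secure aggregation or differential privacy because parameter updates can still leak information.

```python
def federated_average(client_updates: list[tuple[list[float], int]]) -> list[float]:
    """FedAvg aggregation: weighted mean of client parameter vectors,
    weighted by each client's number of local training samples."""
    total = sum(n for _, n in client_updates)
    dim = len(client_updates[0][0])
    averaged = [0.0] * dim
    for weights, n in client_updates:
        if len(weights) != dim:
            raise ValueError("all clients must share the same model shape")
        for i, w in enumerate(weights):
            averaged[i] += w * n / total
    return averaged


# Two jurisdictions train locally; only parameters (not raw records) are shared.
updates = [([1.0, 2.0], 100),   # site A: 100 local samples
           ([3.0, 4.0], 300)]   # site B: 300 local samples
print(federated_average(updates))  # [2.5, 3.5]
```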

Architecture Choices That Determine Outcomes

Where workloads run: cloud, telco cloud, and edge

Hyperscale clouds provide elasticity and mature AI tooling. Telco clouds ensure locality, latency guarantees, and regulatory compliance. Edge infrastructure enables real-time inference near radios and enterprise environments. Effective architectures combine all three. Control planes typically run in cloud environments for agility. User-plane functions and latency-sensitive inference run at the edge. Sensitive datasets remain regionally constrained under policy enforcement.
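The placement logic described above can be expressed as a small decision function. This sketch is hypothetical: the tier names, the round-trip latency figures, and the simplification that only telco cloud and edge keep data in-country are placeholders, not measurements or vendor properties. It prefers the most elastic tier that still meets both the latency budget and the residency constraint.

```python
from typing import Optional

# Hypothetical tiers, ordered from most to least elastic.
# (name, typical round-trip latency in ms, keeps data in-country?)
TIERS = [
    ("hyperscale_cloud", 40.0, False),
    ("telco_cloud", 15.0, True),
    ("edge", 3.0, True),
]


def place(latency_budget_ms: float, must_stay_in_country: bool) -> Optional[str]:
    """Return the most elastic tier that satisfies latency and residency."""
    for name, rtt_ms, in_country in TIERS:
        if rtt_ms > latency_budget_ms:
            continue
        if must_stay_in_country and not in_country:
            continue
        return name
    return None  # no tier can satisfy both constraints


print(place(200.0, must_stay_in_country=False))  # hyperscale_cloud (control plane)
print(place(5.0, must_stay_in_country=False))    # edge (latency-sensitive inference)
print(place(200.0, must_stay_in_country=True))   # telco_cloud (sensitive dataset)
```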

Data fabric and semantic layer

Raw telemetry lacks operational value without structure. Operators require a unified data fabric that normalizes topology, performance counters, customer experience metrics, and logs into a consistent schema. A semantic layer translates technical metrics into operational and business terms, turning low-level counters into meaningful outcomes. Without this, models train on inconsistent definitions, and operational dashboards diverge.
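A semantic layer can start as an explicit mapping from vendor-specific counters to one canonical definition, plus business-facing terms derived from those canonical metrics. The sketch below is a minimal illustration; the vendor names, counter names, and the 25 Mbps "good experience" threshold are hypothetical.

```python
# Hypothetical mapping: (vendor, raw counter) -> (canonical metric, divisor to canonical unit)
COUNTER_MAP: dict[tuple[str, str], tuple[str, int]] = {
    ("vendor_a", "DL_THP_KBPS"): ("dl_throughput_mbps", 1_000),
    ("vendor_b", "dlThroughputBps"): ("dl_throughput_mbps", 1_000_000),
}


def normalize(vendor: str, counter: str, value: float) -> tuple[str, float]:
    """Translate a raw counter into (canonical_metric, value in canonical unit)."""
    try:
        metric, divisor = COUNTER_MAP[(vendor, counter)]
    except KeyError:
        raise KeyError(f"no semantic mapping for {vendor}/{counter}") from None
    return metric, value / divisor


def experience_tier(dl_throughput_mbps: float, good_threshold_mbps: float = 25.0) -> str:
    """Business-facing term derived from the canonical metric."""
    return "good" if dl_throughput_mbps >= good_threshold_mbps else "degraded"


# Two vendors report the same quantity under different names and units.
print(normalize("vendor_a", "DL_THP_KBPS", 50_000))         # ('dl_throughput_mbps', 50.0)
print(normalize("vendor_b", "dlThroughputBps", 12_000_000))  # ('dl_throughput_mbps', 12.0)
print(experience_tier(12.0))                                  # degraded
```

Failing loudly on an unmapped counter is deliberate: a silent pass-through is exactly how models end up training on inconsistent definitions.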

RAN and Open RAN realities

Open RAN increases vendor flexibility and innovation potential but introduces integration complexity and multi-vendor performance tuning challenges. Energy efficiency is also critical, as radio access networks represent a significant and growing share of operating expenditure.

AI-driven capabilities such as adaptive sleep modes and beamforming optimization reduce energy per bit in production environments, though results vary by topology and spectrum mix. AI functions in the RAN should be treated as production-grade network elements with defined SLAs and measurable impact.
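Sleep-mode control is typically built around hysteresis so that a carrier does not oscillate between states. The sketch below is a simplified, rule-based baseline, assuming normalized per-interval load for one capacity carrier; the thresholds and hold period are hypothetical, and an AI-driven variant would feed predicted rather than observed load into the same decision.

```python
def sleep_schedule(load: list[float],
                   sleep_below: float = 0.10,
                   wake_above: float = 0.30,
                   hold_intervals: int = 3) -> list[bool]:
    """Return per-interval sleep state (True = asleep) for one carrier.

    The carrier sleeps only after `hold_intervals` consecutive samples below
    `sleep_below`, and wakes as soon as load exceeds `wake_above`. Loads between
    the two thresholds leave the current state unchanged (hysteresis)."""
    asleep = False
    low_streak = 0
    states = []
    for sample in load:
        if asleep:
            if sample > wake_above:
                asleep = False
                low_streak = 0
        else:
            low_streak = low_streak + 1 if sample < sleep_below else 0
            if low_streak >= hold_intervals:
                asleep = True
        states.append(asleep)
    return states


print(sleep_schedule([0.05, 0.05, 0.05, 0.20, 0.35, 0.05]))
# [False, False, True, True, False, False]
```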

Security, Risk, and Compliance for AI-Native Networks

Zero trust must extend across both human and machine identities. Every component requires authentication and authorization boundaries. Supply chain transparency is essential, including software bills of materials (SBOMs) for virtualized and cloud-native network functions (VNFs and CNFs). AI introduces new risks such as model poisoning, exploitation of data drift, and prompt injection against operational assistants.

A model risk management function should govern data provenance, version control, bias testing, and rollback procedures. For multinational operations, policy-as-code must enforce data residency, access controls, and auditability for regulators.
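The version-control, bias-testing, and rollback duties above can be sketched as a small registry with an append-only audit log. This is a minimal, hypothetical illustration: the class names, the provenance field, and the version strings are assumptions, and a real registry would persist state and record who approved each change.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class ModelVersion:
    version: str
    training_data_ref: str    # provenance: pointer to the training dataset snapshot
    bias_tests_passed: bool


@dataclass
class ModelRegistry:
    deployed: list[ModelVersion] = field(default_factory=list)      # promotion stack
    audit_log: list[tuple[str, str]] = field(default_factory=list)  # append-only

    def promote(self, mv: ModelVersion) -> None:
        """Deploy a version only if it passed bias testing; record the outcome."""
        if not mv.bias_tests_passed:
            self.audit_log.append(("rejected", mv.version))
            raise ValueError(f"{mv.version} failed bias testing")
        self.deployed.append(mv)
        self.audit_log.append(("promoted", mv.version))

    @property
    def active(self) -> Optional[ModelVersion]:
        return self.deployed[-1] if self.deployed else None

    def rollback(self) -> ModelVersion:
        """Retire the active version and reactivate the previous one."""
        if len(self.deployed) < 2:
            raise RuntimeError("no earlier version to roll back to")
        retired = self.deployed.pop()
        self.audit_log.append(("rolled_back", retired.version))
        return self.deployed[-1]


registry = ModelRegistry()
registry.promote(ModelVersion("anomaly-v1", "telemetry-snapshot-2024-01", True))
registry.promote(ModelVersion("anomaly-v2", "telemetry-snapshot-2024-02", True))
print(registry.rollback().version)  # anomaly-v1
```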

KPIs That Matter

Effective cloud-AI transformation connects operational metrics with business outcomes:

Slice SLA compliance across latency, jitter, and throughput targets

Mean time to detect and recover, including automated remediation rates

Forecast accuracy at the cell and cluster levels and resulting capex efficiency

Energy consumption per delivered terabyte by region and network type

First-contact resolution and reduction in field interventions

Revenue assurance gains from anomaly detection in billing and mediation systems
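Several of these KPIs reduce to simple, well-defined calculations once the underlying data is consistent. The sketch below computes three of them under stated assumptions: slice compliance counts an interval only if latency, jitter, and throughput targets are all met; MTTD and MTTR are both measured from incident start (definitions vary between operators); and all sample values are invented for illustration.

```python
from dataclasses import dataclass


def slice_sla_compliance(samples: list[tuple[float, float, float]],
                         max_latency_ms: float,
                         max_jitter_ms: float,
                         min_throughput_mbps: float) -> float:
    """Share of intervals meeting latency, jitter, AND throughput targets.
    Each sample is (latency_ms, jitter_ms, throughput_mbps)."""
    ok = sum(1 for lat, jit, thr in samples
             if lat <= max_latency_ms and jit <= max_jitter_ms and thr >= min_throughput_mbps)
    return ok / len(samples)


@dataclass
class Incident:
    started_min: float      # minutes from a common reference point
    detected_min: float
    recovered_min: float
    auto_remediated: bool


def detection_recovery(incidents: list[Incident]) -> tuple[float, float, float]:
    """Return (MTTD, MTTR, automated remediation rate), means measured from incident start."""
    n = len(incidents)
    mttd = sum(i.detected_min - i.started_min for i in incidents) / n
    mttr = sum(i.recovered_min - i.started_min for i in incidents) / n
    auto_rate = sum(i.auto_remediated for i in incidents) / n
    return mttd, mttr, auto_rate


def energy_per_tb(energy_kwh: float, delivered_tb: float) -> float:
    """Energy consumption per delivered terabyte (kWh/TB)."""
    return energy_kwh / delivered_tb


samples = [(8, 1, 120), (12, 1, 120), (9, 3, 120), (7, 1, 80)]
print(slice_sla_compliance(samples, 10, 2, 100))  # 0.25
print(detection_recovery([Incident(0, 2, 10, True), Incident(0, 4, 30, False)]))
# (3.0, 20.0, 0.5)
print(energy_per_tb(1200, 400))  # 3.0
```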

Policy and Spectrum Context

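Regulatory alignment will shape adoption speed. The EU AI Act sets transparency, documentation, and risk-management requirements for high-risk AI systems, a category that includes AI used as a safety component in the management and operation of critical digital infrastructure.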

In the United States, spectrum policy, rural coverage programs, and research initiatives such as PAWR testbeds (including COSMOS and POWDER) continue to enable experimentation in Open RAN and edge-native systems.

Operators should engage early with regulators to define acceptable AI usage in network operations, data residency constraints, and auditability standards for automated decisions.

What to Avoid

Two recurring failure modes stand out. First, tool sprawl without a unified data and semantic layer leads to fragmented insights and inconsistent decisions. Second, automation without governance creates operational and regulatory risk, eventually forcing rollback of otherwise valuable systems. Both are avoidable with coherent architecture and embedded policy controls.
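Embedded policy controls can be as simple as a gate that every automated action passes through before execution. The sketch below is hypothetical: the action names, the confidence and blast-radius thresholds, and the three outcomes (`auto`, `approval`, `reject`) are illustrative assumptions showing how automation thresholds and approval boundaries can be encoded, not a reference design.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedAction:
    name: str
    confidence: float           # model confidence in [0, 1]
    affected_subscribers: int   # estimated blast radius


# Hypothetical governance thresholds.
AUTO_MIN_CONFIDENCE = 0.90
AUTO_MAX_SUBSCRIBERS = 10_000
REJECT_BELOW_CONFIDENCE = 0.50
ALWAYS_REQUIRE_APPROVAL = {"modify_emergency_slice"}


def route_action(action: ProposedAction) -> str:
    """Decide whether an automated action runs, waits for a human, or is dropped."""
    if action.name in ALWAYS_REQUIRE_APPROVAL:
        return "approval"  # protected scope: a human decides regardless of confidence
    if action.confidence < REJECT_BELOW_CONFIDENCE:
        return "reject"
    if (action.confidence >= AUTO_MIN_CONFIDENCE
            and action.affected_subscribers <= AUTO_MAX_SUBSCRIBERS):
        return "auto"
    return "approval"


print(route_action(ProposedAction("scale_upf", 0.95, 2_000)))             # auto
print(route_action(ProposedAction("reroute_core", 0.95, 250_000)))        # approval
print(route_action(ProposedAction("modify_emergency_slice", 0.99, 500)))  # approval
```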

Conclusion

Cloud-AI in telecom changes how control works in network systems, going beyond virtualization and automation. It connects infrastructure operations, data analysis, and decision-making, domains that have historically evolved separately.

The main obstacles to turning telecom's digital investments into real ROI come from misaligned systems, not from the AI itself. Disconnected observability tools and vague metric definitions make outcomes hard to predict and increase the need for manual intervention. Automation projects often fail when no one is clearly accountable for actions taken by machines.

The design choices that matter most are where computing happens, how operational data is standardized, and how automated control fits within governance. These choices determine whether AI can play a central role in operations.

This view aligns with broader lessons from telecom transformation: a well-coordinated operating model is essential for scaling intelligent networks.

In summary, successful adoption relies on consistent execution across systems instead of simply adding more automation layers.